- Only AMD powers the full spectrum of AI, bringing together leading GPUs, CPUs, networking and open software to deliver unmatched flexibility and performance

- Meta, OpenAI, xAI, Oracle, Microsoft, Cohere, HUMAIN, Red Hat, Astera Labs and Marvell discuss how they are partnering with AMD for AI solutions

SANTA CLARA, Calif., June 12, 2025 (GLOBE NEWSWIRE) -- AMD (NASDAQ: AMD) delivered its comprehensive, end-to-end integrated AI platform vision and introduced its open, scalable rack-scale AI infrastructure built on industry standards at its Advancing AI 2025 event.

AMD and its partners showcased:

- How they are building the open AI ecosystem with AMD Instinct™ MI350 Series accelerators

- The continued growth of the AMD ROCm™ ecosystem

- Powerful new rack-scale designs and the company’s roadmap, which carry leadership rack-scale AI performance beyond 2027

“AMD is driving AI innovation at an unprecedented pace, highlighted by the launch of our AMD Instinct MI350 series accelerators, advances in our next-generation AMD ‘Helios’ rack-scale solutions, and growing momentum for our ROCm open software stack,” said Dr. Lisa Su, AMD chair and CEO. “We are entering the next phase of AI, driven by open standards, shared innovation and AMD’s expanding leadership across an ecosystem of hardware and software partners who are collaborating to define the future of AI.”

AMD Delivers Leadership Solutions to Accelerate an Open AI Ecosystem

AMD announced a broad portfolio of hardware, software and solutions to power the full spectrum of AI:

AMD unveiled the Instinct MI350 Series GPUs, setting a new benchmark for performance, efficiency and scalability in generative AI and high-performance computing. The MI350 Series, consisting of the Instinct MI350X and MI355X GPUs and platforms, delivers a 4x generation-on-generation increase in AI compute[i] and a 35x generational leap in inferencing[ii], paving the way for transformative AI solutions across industries. The MI355X also delivers significant price-performance gains, generating up to 40% more tokens-per-dollar compared to competing solutions[iii]. More details are available in this blog from Vamsi Boppana, AMD SVP, AI.

AMD demonstrated end-to-end, open-standards rack-scale AI infrastructure, already rolling out with AMD Instinct MI350 Series accelerators, 5th Gen AMD EPYC™ processors and AMD Pensando™ Pollara NICs in hyperscaler deployments such as Oracle Cloud Infrastructure (OCI) and set for broad availability in the second half of 2025.

AMD also previewed its next-generation AI rack, called “Helios.” It will be built on the next-generation AMD Instinct MI400 Series GPUs, which compared to the previous generation are expected to deliver up to 10x more performance running inference on Mixture of Experts models[iv], together with “Zen 6”-based AMD EPYC “Venice” CPUs and AMD Pensando “Vulcano” NICs. More details are available in this blog post.

The latest version of the AMD open-source AI software stack, ROCm 7, is engineered to meet the growing demands of generative AI and high-performance computing workloads, while dramatically improving the developer experience across the board. ROCm 7 features improved support for industry-standard frameworks, expanded hardware compatibility, and new development tools, drivers, APIs and libraries to accelerate AI development and deployment. More details are available in this blog post from Anush Elangovan, AMD CVP of AI Software Development.

The Instinct MI350 Series exceeded AMD’s five-year goal to improve the energy efficiency of AI training and high-performance computing nodes by 30x, ultimately delivering a 38x improvement[v]. AMD also unveiled a new 2030 goal to deliver a 20x increase in rack-scale energy efficiency from a 2024 base year[vi], enabling a typical AI model that today requires more than 275 racks to be trained in fewer than one fully utilized rack by 2030, using 95% less electricity[vii]. More details are available in this blog post from Sam Naffziger, AMD SVP and Corporate Fellow.

AMD also announced the broad availability of the AMD Developer Cloud for the global developer and open-source communities. Purpose-built for rapid, high-performance AI development, it gives users access to a fully managed cloud environment with the tools and flexibility to get started with AI projects and grow without limits. With ROCm 7 and the AMD Developer Cloud, AMD is lowering barriers and expanding access to next-generation compute. Strategic collaborations with leaders such as Hugging Face, OpenAI and Grok are proving the power of co-developed, open solutions.

Broad Partner Ecosystem Showcases AI Progress Powered by AMD

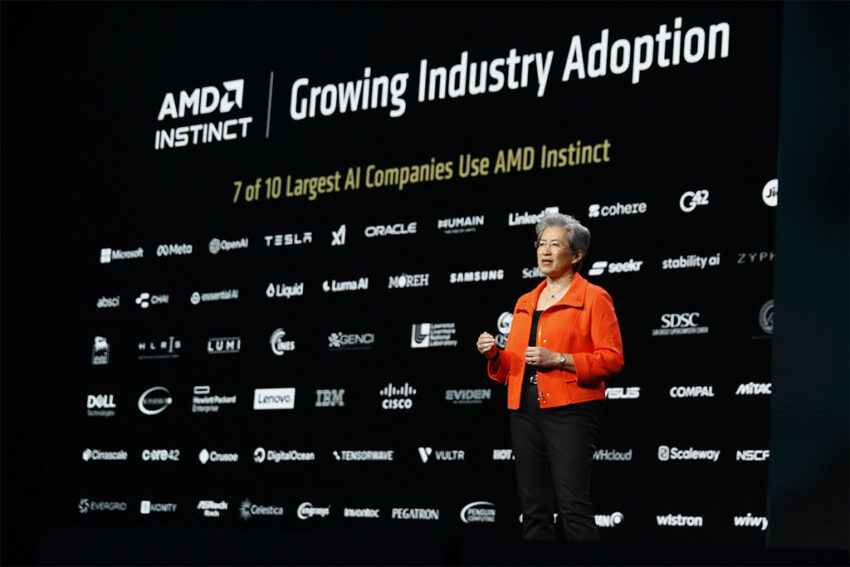

Today, seven of the 10 largest model builders and AI companies are running production workloads on Instinct accelerators. Among those companies are Meta, OpenAI, Microsoft and xAI, who joined AMD and other partners at Advancing AI to discuss how they are working with AMD for AI solutions to train today’s leading AI models, power inference at scale and accelerate AI exploration and development:

- Meta detailed how Instinct MI300X is broadly deployed for Llama 3 and Llama 4 inference. Meta shared its excitement for MI350 and its compute power, performance per TCO and next-generation memory. Meta continues to collaborate closely with AMD on AI roadmaps, including plans for the Instinct MI400 Series platform.

- OpenAI CEO Sam Altman discussed the importance of holistically optimized hardware, software and algorithms, and OpenAI’s close partnership with AMD on AI infrastructure, with research and GPT models on Azure running in production on MI300X, as well as deep design engagements on the MI400 Series platforms.

- Oracle Cloud Infrastructure (OCI) is among the first industry leaders to adopt the AMD open rack-scale AI infrastructure with AMD Instinct MI355X GPUs. OCI leverages AMD CPUs and GPUs to deliver balanced, scalable performance for AI clusters, and announced it will offer zettascale AI clusters accelerated by the latest AMD Instinct processors with up to 131,072 MI355X GPUs to enable customers to build, train and inference AI at scale.

- HUMAIN discussed its landmark agreement with AMD to build open, scalable, resilient and cost-efficient AI infrastructure leveraging the full spectrum of computing platforms only AMD can provide.

- Microsoft announced that Instinct MI300X is now powering both proprietary and open-source models in production on Azure.

- Cohere shared that its high-performance, scalable Command models are deployed on Instinct MI300X, powering enterprise-grade LLM inference with high throughput, efficiency and data privacy.

- Red Hat described how its expanded collaboration with AMD enables production-ready AI environments, with AMD Instinct GPUs on Red Hat OpenShift AI delivering powerful, efficient AI processing across hybrid cloud environments.

- Astera Labs highlighted how the open UALink ecosystem accelerates innovation and delivers greater value to customers, and shared plans to offer a comprehensive portfolio of UALink products to support next-generation AI infrastructure.

- Marvell joined AMD to highlight its collaboration as part of the UALink Consortium developing an open interconnect, bringing the ultimate flexibility for AI infrastructure.

Supporting Resources

Learn more about the event here.

Access the AAI 2025 press kit here.

Learn more about AMD AI solutions here.

Connect with AMD on LinkedIn

About AMD

For more than 55 years, AMD has driven innovation in high-performance computing, graphics and visualization technologies. Hundreds of millions of consumers, Fortune 500 businesses and leading scientific research facilities around the world rely on AMD technology to improve how they live, work and play. AMD employees are focused on building high-performance and adaptive products that push the boundaries of what is possible. For more information about how AMD is enabling today and inspiring tomorrow, visit www.amd.com.

Cautionary Statement

This press release contains forward-looking statements concerning Advanced Micro Devices, Inc. (AMD) such as the features, functionality, performance, availability, timing and expected benefits of AMD products and roadmaps; AMD’s AI platform; and AMD’s partner ecosystem, which are made pursuant to the Safe Harbor provisions of the Private Securities Litigation Reform Act of 1995. Forward-looking statements are commonly identified by words such as “would,” “may,” “expects,” “believes,” “plans,” “intends,” “projects” and other terms with similar meaning. Investors are cautioned that the forward-looking statements in this press release are based on current beliefs, assumptions and expectations, speak only as of the date of this press release and involve risks and uncertainties that could cause actual results to differ materially from current expectations. Such statements are subject to certain known and unknown risks and uncertainties, many of which are difficult to predict and generally beyond AMD’s control, that could cause actual results and other future events to differ materially from those expressed in, or implied or projected by, the forward-looking information and statements. Material factors that could cause actual results to differ materially from current expectations include, without limitation, the following: Intel Corporation’s dominance of the microprocessor market and its aggressive business practices; Nvidia’s dominance in the graphics processing unit market and its aggressive business practices; competitive markets in which AMD’s products are sold; the cyclical nature of the semiconductor industry; market conditions of the industries in which AMD products are sold; AMD’s ability to introduce products on a timely basis with expected features and performance levels; loss of a significant customer; economic and market uncertainty; quarterly and seasonal sales patterns; AMD’s ability to adequately protect its technology or other intellectual property; unfavorable currency exchange rate fluctuations; ability of third party manufacturers to manufacture AMD’s products on a timely basis in sufficient quantities and using competitive technologies; availability of essential equipment, materials, substrates or manufacturing processes; ability to achieve expected manufacturing yields for AMD’s products; AMD’s ability to generate revenue from its semi-custom SoC products; potential security vulnerabilities; potential security incidents including IT outages, data loss, data breaches and cyberattacks; uncertainties involving the ordering and shipment of AMD’s products; AMD’s reliance on third-party intellectual property to design and introduce new products; AMD’s reliance on third-party companies for design, manufacture and supply of motherboards, software, memory and other computer platform components; AMD’s reliance on Microsoft and other software vendors’ support to design and develop software to run on AMD’s products; AMD’s reliance on third-party distributors and add-in-board partners; impact of modification or interruption of AMD’s internal business processes and information systems; compatibility of AMD’s products with some or all industry-standard software and hardware; costs related to defective products; efficiency of AMD’s supply chain; AMD’s ability to rely on third party supply-chain logistics functions; AMD’s ability to effectively control sales of its products on the gray market; long-term impact of climate change on AMD’s business; impact of government actions and regulations such as export regulations, tariffs and trade protection measures, and licensing requirements; AMD’s ability to realize its deferred tax assets; potential tax liabilities; current and future claims and litigation; impact of environmental laws, conflict minerals related provisions and other laws or regulations; evolving expectations from governments, investors, customers and other stakeholders regarding corporate responsibility matters; issues related to the responsible use of AI; restrictions imposed by agreements governing AMD’s notes, the guarantees of Xilinx’s notes, the revolving credit agreement and the ZT Systems credit agreement; impact of acquisitions, joint ventures and/or strategic investments on AMD’s business and AMD’s ability to integrate acquired businesses, including ZT Systems; AMD’s ability to sell the ZT Systems manufacturing business; impact of any impairment of the combined company’s assets; political, legal and economic risks and natural disasters; future impairments of technology license purchases; AMD’s ability to attract and retain qualified personnel; and AMD’s stock price volatility. Investors are urged to review in detail the risks and uncertainties in AMD’s Securities and Exchange Commission filings, including but not limited to AMD’s most recent reports on Forms 10-K and 10-Q.

AMD, the AMD Arrow logo, EPYC, AMD CDNA, AMD Instinct, Pensando, ROCm, Ryzen, and combinations thereof are trademarks of Advanced Micro Devices, Inc. Other names are for informational purposes only and may be trademarks of their respective owners.

[i] Based on calculations by AMD Performance Labs in May 2025, to determine the peak theoretical precision performance of eight (8) AMD Instinct™ MI355X and MI350X GPUs (Platform) and eight (8) AMD Instinct MI325X, MI300X, MI250X and MI100 GPUs (Platform) using the FP16, FP8, FP6 and FP4 datatypes with Matrix. Server manufacturers may vary configurations, yielding different results. Results may vary based on use of the latest drivers and optimizations. MI350-004

[ii] MI350-044: Based on AMD internal testing as of 6/9/2025. Using 8 GPU AMD Instinct™ MI355X Platform measuring text generated online serving inference throughput for Llama 3.1-405B chat model (FP4) compared to 8 GPU AMD Instinct™ MI300X Platform performance with (FP8). Test was performed using input length of 32768 tokens and an output length of 1024 tokens with concurrency set to best available throughput to achieve 60ms on each platform, 1 for MI300X (35.3ms) and 64ms for MI355X platforms (50.6ms). Server manufacturers may vary configurations, yielding different results. Performance may vary based on use of latest drivers and optimizations.

[iii] Based on performance testing by AMD Labs as of 6/6/2025, measuring the text generated inference throughput on the LLaMA 3.1-405B model using the FP4 datatype with various combinations of input, output token length with AMD Instinct™ MI355X 8x GPU, and published results for the NVIDIA B200 HGX 8xGPU. Performance per dollar calculated with current pricing for NVIDIA B200 available from Coreweave website and expected Instinct MI355X based cloud instance pricing. Server manufacturers may vary configurations, yielding different results. Performance may vary based on use of latest drivers and optimizations. Current customer pricing as of June 10, 2025, and subject to change. MI350-049

[iv] MI400-001: Performance projection as of 06/05/2025 using engineering estimates based on the design of a future AMD Instinct MI400 Series GPU compared to the Instinct MI355X, with 2K and 16K prefill with TP8, EP8 and projected inference performance, and using a GenAI training model evaluated with GEMM and Attention algorithms for the Instinct MI400 Series. Results may vary when products are released in market. (MI400-001)

[v] EPYC-030a: Calculation includes 1) base case kWhr use projections in 2025 conducted with Koomey Analytics based on available research and data that includes segment specific projected 2025 deployment volumes and data center power utilization effectiveness (PUE) including GPU HPC and machine learning (ML) installations and 2) AMD CPU and GPU node power consumptions incorporating segment-specific utilization (active vs. idle) percentages and multiplied by PUE to determine actual total energy use for calculation of the performance per Watt. 38x is calculated using the following formula: (Base case HPC node kWhr use projection in 2025 * AMD 2025 perf/Watt improvement using DGEMM and TEC + Base case ML node kWhr use projection in 2025 * AMD 2025 perf/Watt improvement using ML math and TEC) / (Base case projected kWhr usage in 2025). For more information, see https://www.amd.com/en/corporate/corporate-responsibility/data-center-sustainability.html.

[vi] AMD based advanced racks for AI training/inference in each year (2024 to 2030) based on AMD roadmaps, also examining historical trends to inform rack design choices and technology improvements to align projected goals and historical trends. The 2024 rack is based on the MI300X node, which is comparable to the Nvidia H100 and reflects current common practice in AI deployments in 2024/2025 timeframe. The 2030 rack is based on an AMD system and silicon design expectations for that time frame. In each case, AMD specified components like GPUs, CPUs, DRAM, storage, cooling, and communications, tracking component and defined rack characteristics for power and performance.

Calculations do not include power used for cooling air or water supply outside the racks but do include power for fans and pumps internal to the racks.

Performance improvements are estimated based on progress in compute output (delivered, sustained, not peak FLOPS), memory (HBM) bandwidth, and network (scale-up) bandwidth, expressed as indices and weighted by the following factors for training and inference.

| Workload | FLOPS | HBM BW | Scale-up BW |
| --- | --- | --- | --- |
| Training | 70.0% | 10.0% | 20.0% |
| Inference | 45.0% | 32.5% | 22.5% |

Performance and power use per rack together imply trends in performance per watt over time for training and inference, then indices for progress in training and inference are weighted 50:50 to get the final estimate of AMD projected progress by 2030 (20x). The performance number assumes continued AI model progress in exploiting lower precision math formats for both training and inference which results in both an increase in effective FLOPS and a reduction in required bandwidth per FLOP.
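The weighting scheme in this footnote can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not AMD's actual model: the weights are the training and inference factors listed in the footnote, while the component improvement indices, the rack power ratio and the function names are hypothetical placeholders chosen only to show the arithmetic.

```python
# Weights from the footnote: share of each component in the performance index.
WEIGHTS = {
    "training": {"flops": 0.70, "hbm_bw": 0.10, "scale_up_bw": 0.20},
    "inference": {"flops": 0.45, "hbm_bw": 0.325, "scale_up_bw": 0.225},
}


def performance_index(component_gains, weights):
    """Weighted sum of per-component improvement indices (baseline year = 1.0)."""
    return sum(weights[name] * component_gains[name] for name in weights)


def efficiency_gain(component_gains, power_ratio):
    """Blend training and inference performance-per-watt gains 50:50.

    power_ratio is rack power in the target year divided by rack power in the
    baseline year (an assumed way of expressing "power use per rack").
    """
    per_watt = {
        workload: performance_index(component_gains, w) / power_ratio
        for workload, w in WEIGHTS.items()
    }
    return 0.5 * per_watt["training"] + 0.5 * per_watt["inference"]


# Hypothetical inputs for illustration only (not AMD roadmap figures).
example_gains = {"flops": 10.0, "hbm_bw": 4.0, "scale_up_bw": 6.0}
example_power_ratio = 2.0

print(efficiency_gain(example_gains, example_power_ratio))
```

Because FLOPS carries 70% of the training weight but only 45% of the inference weight, the same hardware gains produce a higher training index than inference index whenever compute improves faster than bandwidth.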

[vii] AMD estimated the number of racks to train a typical notable AI model based on EPOCH AI data (https://epoch.ai). For this calculation we assume, based on these data, that a typical model takes 10^25 floating point operations to train (based on the median of 2025 data), and that this training takes place over 1 month. FLOPs needed = 10^25 FLOPs/(seconds/month)/Model FLOPs utilization (MFU) = 10^25/(2.6298*10^6)/0.6. Racks = FLOPs needed/(FLOPS/rack in 2024 and 2030). The compute performance estimates from the AMD roadmap suggest that approximately 276 racks would be needed in 2025 to train a typical model over one month using the MI300X product (assuming 22.656 PFLOPS/rack with 60% MFU) and <1 fully utilized rack would be needed to train the same model in 2030 using a rack configuration based on an AMD roadmap projection. These calculations imply a >276-fold reduction in the number of racks to train the same model over this six-year period. Electricity use for a MI300X system to completely train a defined 2025 AI model using a 2024 rack is calculated at ~7 GWh, whereas the future 2030 AMD system could train the same model using ~350 MWh, a 95% reduction. AMD then applied carbon intensities per kWh from the International Energy Agency World Energy Outlook 2024 [https://www.iea.org/reports/world-energy-outlook-2024]. IEA’s stated policy case gives carbon intensities for 2023 and 2030. We determined the average annual change in intensity from 2023 to 2030 and applied that to the 2023 intensity to get 2024 intensity (434 CO2 g/kWh) versus the 2030 intensity (312 CO2 g/kWh). Emissions for the 2024 baseline scenario of 7 GWh x 434 CO2 g/kWh equates to approximately 3000 metric tCO2, versus the future 2030 scenario of 350 MWh x 312 CO2 g/kWh equates to around 100 metric tCO2.
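The arithmetic in this footnote can be reproduced with a short Python sketch. It uses only the rounded figures printed in the footnote and follows the stated formula literally. The function and variable names are illustrative, and reading 22.656 PFLOPS as the per-rack figure that divides the MFU-adjusted requirement is an assumption.

```python
SECONDS_PER_MONTH = 2.6298e6   # value used in the footnote
TRAINING_FLOP = 1e25           # total floating point operations to train the model
MFU = 0.6                      # model FLOPs utilization
RACK_PFLOPS_2024 = 22.656      # per-rack figure stated for the MI300X rack


def racks_needed(total_flop, seconds, mfu, rack_pflops):
    """Racks required to finish training within the given wall-clock time."""
    required_flops = total_flop / seconds / mfu   # FLOP/s that must be provisioned
    return required_flops / (rack_pflops * 1e15)


def emissions_tonnes(energy_kwh, grams_co2_per_kwh):
    """Convert energy use and carbon intensity into metric tons of CO2."""
    return energy_kwh * grams_co2_per_kwh / 1e6


racks_2024 = racks_needed(TRAINING_FLOP, SECONDS_PER_MONTH, MFU, RACK_PFLOPS_2024)
energy_2024_kwh = 7e6     # ~7 GWh
energy_2030_kwh = 350e3   # ~350 MWh
reduction = 1 - energy_2030_kwh / energy_2024_kwh

print(f"racks with the 2024 rack: {racks_2024:.1f}")
print(f"electricity reduction: {reduction:.0%}")
print(f"2024 emissions: {emissions_tonnes(energy_2024_kwh, 434):.0f} t CO2")
print(f"2030 emissions: {emissions_tonnes(energy_2030_kwh, 312):.0f} t CO2")
```

With these rounded inputs the rack count comes out near 280, in the same range as the footnote's "approximately 276"; the small gap presumably reflects rounding in the published inputs. The electricity and emissions figures line up with the stated 95% reduction and the approximate 3000 and 100 metric ton values.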

Contact:

Brandi Martina

AMD Communications

(512) 705-1720

Liz Stine

AMD Investor Relations

+1 720-652-3965